Logistic Regression

- A linear ML model often used for classification tasks.

| Item | Description |
|---|---|
| Activation function | Logistic function |
| Loss function | Cross entropy (CXE) |

The logistic function:

\[\sigma(z) = \frac{1}{1 + e^{-z}}\]

Its output lies in \((0, 1)\) and is interpreted as the probability, as estimated by the model, that the sample belongs to a given class.
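As an illustration, the logistic function can be sketched in Python as follows. The name `sigmoid` and the two-branch form, which keeps `math.exp` from overflowing on large negative inputs, are choices made for this sketch and are not prescribed by these notes.

```python
import math


def sigmoid(z: float) -> float:
    """Logistic function 1 / (1 + e^(-z)), evaluated without overflow."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    # For negative z, use the equivalent form e^z / (1 + e^z) so that
    # math.exp never receives a large positive argument.
    e = math.exp(z)
    return e / (1.0 + e)


print(sigmoid(0.0))  # 0.5: a score of zero maps to a probability of one half
```

Large positive scores approach 1 and large negative scores approach 0, which is what lets the output be read as a probability.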

Vectorized Logistic Regression

- \(N\) data points: \(x_{i}\) for \(i = 1, 2, \ldots, N\)

- Each data point has \(D\) dimensions: \(x_{i} = [x_{i1} \; x_{i2} \; \ldots \; x_{iD}] \in \mathbb{R}^{1 \times D}\)

- Each data point has a corresponding class label: \(y_{i} \in \{0, 1\}\)

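Vectorized equation, with a weight vector \(w \in \mathbb{R}^{D \times 1}\) and a bias \(b \in \mathbb{R}\) as the parameters to learn, and \(\sigma\) the logistic function:

\[o_{i} = \sigma(x_{i} w + b)\]

When the resulting probability \(o_{i}\) is \(> 0.5\), class 1 is assigned; when it is \(\leq 0.5\), class 0 is assigned.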
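The prediction rule above can be sketched with NumPy, computing all \(N\) probabilities in one matrix product. This is a minimal sketch: the function name `predict`, the toy data, and the weight values are hypothetical, and the weights are stored as a 1-D array of length \(D\) for convenience.

```python
import numpy as np


def predict(X, w, b, threshold=0.5):
    """Return (probabilities, class labels) for every row of X."""
    z = X @ w + b                      # scores, shape (N,)
    probs = 1.0 / (1.0 + np.exp(-z))   # logistic function, element-wise
    labels = (probs > threshold).astype(int)  # 1 if prob > threshold, else 0
    return probs, labels


# Hypothetical toy data: N = 3 points with D = 2 features each.
X = np.array([[1.0, 2.0], [-1.0, -2.0], [0.0, 0.0]])
w = np.array([1.0, 1.0])  # made-up weights, not learned
b = 0.0

probs, labels = predict(X, w, b)
print(labels)  # [1 0 0]: the third point has probability exactly 0.5, so class 0
```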

Training minimizes the cross-entropy loss (CXE) over the training set.

Cross Entropy Loss

- \(y\) is the vector of predicted probabilities (in this section \(y\) denotes the predictions, not the class labels);

- \(l\) is the vector of true labels;

- Can be optimized with gradient descent.

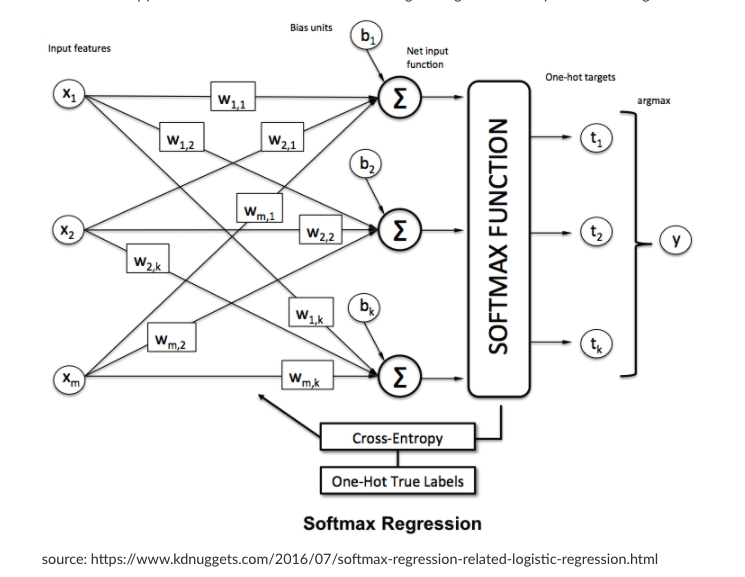
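For the binary case, the cross-entropy loss is commonly written as \(-\frac{1}{N} \sum_{i} \left[ l_{i} \log y_{i} + (1 - l_{i}) \log(1 - y_{i}) \right]\). The sketch below evaluates that expression with \(y\) as the predictions and \(l\) as the true labels. Averaging over the samples (rather than summing) and the clipping constant `eps` are assumptions of this sketch.

```python
import math


def cross_entropy(y, l, eps=1e-12):
    """Mean binary cross-entropy between predictions y and true labels l."""
    total = 0.0
    for y_i, l_i in zip(y, l):
        # Clip the prediction away from 0 and 1 so that log() stays finite.
        y_i = min(max(y_i, eps), 1.0 - eps)
        total += -(l_i * math.log(y_i) + (1 - l_i) * math.log(1.0 - y_i))
    return total / len(y)


confident = cross_entropy([0.9, 0.1], [1, 0])  # predictions close to the labels
unsure = cross_entropy([0.5, 0.5], [1, 0])     # predictions at chance level
print(confident < unsure)  # True: better predictions give a lower loss
```

Gradient descent then adjusts the parameters in the direction that lowers this value.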

Softmax Regression

With multiple classes (say 10), the probabilities produced by 10 separate logistic regression models, one per class, need not sum to 1, so together they do not form a probability distribution across classes. Instead, we use softmax.

The softmax activation function is applied to a vector of scores to normalize it into a probability distribution: every output lies between 0 and 1, and the outputs sum to 1.

Softmax turns the scores from the different models into a single probability distribution across all the classes.

An example of how to code SoftMax in Python:

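The original listing is not available here, so what follows is a minimal stand-in sketch rather than the author's code. It computes \(e^{z_{c}} / \sum_{k} e^{z_{k}}\) for each score \(z_{c}\); subtracting the maximum score first is a common stability trick and does not change the result.

```python
import math


def softmax(scores):
    """Map a list of real-valued scores to probabilities that sum to 1."""
    shift = max(scores)  # subtracting the max avoids overflow in exp()
    exps = [math.exp(s - shift) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


probs = softmax([1.0, 2.0, 3.0])
print(probs)  # three probabilities, increasing with the score
```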

Vectorized Softmax

- \(N\) data points: \(x_{i}\) for \(i = 1, 2, \ldots, N\)

- Each data point has \(D\) dimensions: \(x_{i} = [x_{i1} \; x_{i2} \; \ldots \; x_{iD}] \in \mathbb{R}^{1 \times D}\)

If we have \(C\) classes, indexed by \(c = 1, 2, \ldots, C\):

- Each data point has a class label: \(y_{i} \in \{1, 2, \ldots, C\}\)

- One-hot vector representation: label 2, for example, can be represented as the vector \(y_{i} = [0 \; 1 \; \ldots \; 0] \in \mathbb{R}^{1 \times C}\)

- \(W \in \mathbb{R}^{D \times C}\) and \(b \in \mathbb{R}^{1 \times C}\) are the parameters to learn

- \(o_{i} \in \mathbb{R}^{1 \times C}\) is the **prediction of** \(y_{i}\)
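With these shapes, one plausible forward pass stacks the data points into an \(N \times D\) matrix and applies softmax to each row of \(XW + b\), so that row \(i\) of the result is \(o_{i}\). The sketch below assumes that formulation; the helper name `softmax_rows`, the toy sizes, and the parameter values are hypothetical.

```python
import numpy as np


def softmax_rows(Z):
    """Apply softmax independently to each row of Z (shape N x C)."""
    Z = Z - Z.max(axis=1, keepdims=True)  # stabilize: largest score per row is 0
    E = np.exp(Z)
    return E / E.sum(axis=1, keepdims=True)


# Hypothetical toy sizes: N = 3 points, D = 2 features, C = 3 classes.
X = np.array([[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]])   # N x D
W = np.array([[0.1, 0.2, 0.3], [0.0, -0.1, 0.1]])    # D x C, made-up values
b = np.zeros((1, 3))                                 # 1 x C, broadcast over rows

O = softmax_rows(X @ W + b)       # N x C; row i is the prediction o_i
predicted = O.argmax(axis=1) + 1  # +1 because the labels run from 1 to C
print(O.shape, predicted)         # (3, 3) [3 3 3]
```

Each row of `O` sums to 1, so it can be compared directly against the one-hot vector of the true label.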

One-Hot Encoding

One-hot encoding (OHE) transforms the true labels of the dataset into binary vectors, with one entry per class, for training the individual models. If there are 10 classes, every label is converted into a vector of length 10. This vector is 0 everywhere except at the one position corresponding to the class the sample belongs to; that position holds a 1.
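A minimal sketch of this conversion, assuming labels are numbered from 1 to the number of classes as in the vectorized notation above (the function name `one_hot` is hypothetical):

```python
def one_hot(label, num_classes):
    """Return a one-hot list for a label in 1..num_classes."""
    if not 1 <= label <= num_classes:
        raise ValueError("label must be between 1 and num_classes")
    vector = [0] * num_classes
    vector[label - 1] = 1  # labels start at 1, list indices at 0
    return vector


print(one_hot(2, 10))  # [0, 1, 0, 0, 0, 0, 0, 0, 0, 0]
```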